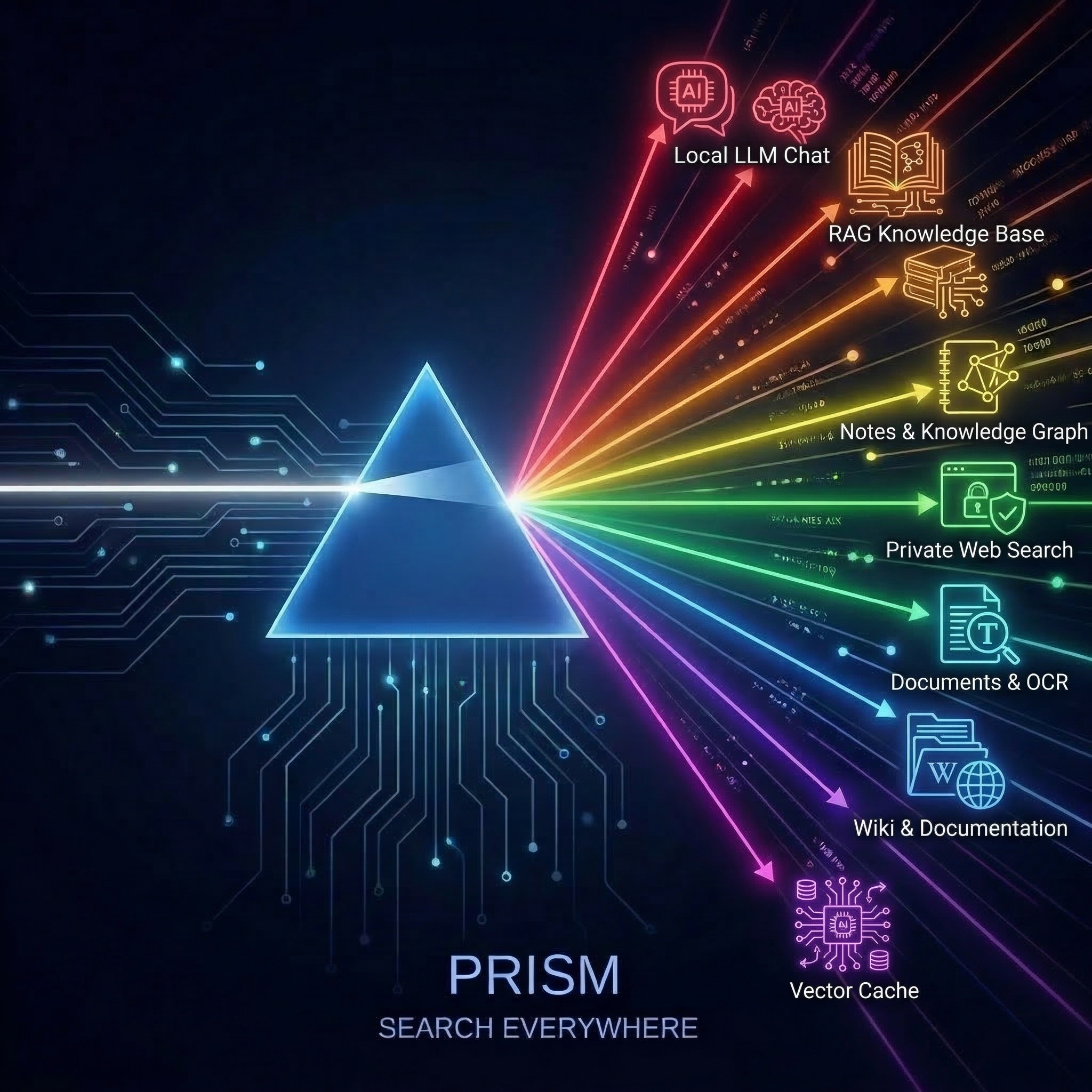

Search Everything.

Leak Nothing.

Pay Once.

Press Ctrl+Alt+Space (Cmd+Option+Space on macOS) to search Notion, Slack, Drive, Obsidian, and 14 more tools at once, powered by your own local LLM. No cloud round-trip. No subscription. Your data never leaves your machine.

No credit card or email required. Installs in seconds.

Stop Digging. Start Asking.

Information is scattered across Slack threads, Notion pages, local PDFs, and Google Drive. Prism bridges the gap. A single query searches all your tools at once, synthesizes the context, and hands you the exact answer — complete with clickable source citations.

Qdrant (Instant): vector cache with instant semantic matches from previous searches

Paperless-ngx (Live): scanned documents, receipts, and PDFs with full OCR text

BookStack (Live): wiki pages, shelves, and chapters from your documentation hub

Obsidian (Live): Markdown notes, daily journals, and knowledge graph links

SearXNG / Brave (Live): private web results without tracking

LM Studio (Live): local LLM chat; ask questions and get AI answers on-device

AnythingLLM (Live): RAG knowledge base with answers grounded in your documents

Ollama (Live): local LLM runner; pull and run models with one command

Google Drive (Live): Docs, Sheets, and Slides, full-text searched with your own credentials

n8n (Live): trigger automations and run any workflow from the palette

Notion (Live): pages, databases, and wikis across your entire workspace

Local Files (Live): full-text search across local documents, code, and text files

Email (Live): IMAP inbox search; find messages without leaving the palette

Slack (Live): search channels and DMs to find conversations instantly

Confluence (Live): spaces, pages, and blog posts from your team knowledge base

Nextcloud (Live): self-hosted cloud; search files, calendars, and contacts

Anthropic / Claude (Live): BYOK cloud AI with frontier models on your terms and your own API keys

Qdrant cached results appear instantly. Live sources stream in as they complete.
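The cache-first half of this behavior can be sketched as a similarity lookup. You embed the new query, compare it with the embeddings of earlier queries, and reuse a stored answer when one is close enough. What follows is a minimal in-memory Python sketch of that idea. It is not the Qdrant client API. The `SemanticCache` class, the 0.9 cosine threshold, and the toy three-dimensional vectors are all assumptions for illustration; real embeddings come from a model and have hundreds of dimensions.

```python
from __future__ import annotations

import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Toy in-memory stand-in for a vector store used as a query cache (hypothetical)."""

    def __init__(self, threshold: float = 0.9) -> None:
        self.threshold = threshold  # assumed cutoff; tune it for your embedding model
        self.entries: list[tuple[list[float], str]] = []

    def put(self, query_vec: list[float], answer: str) -> None:
        """Remember the answer that was produced for a query embedding."""
        self.entries.append((query_vec, answer))

    def get(self, query_vec: list[float]) -> str | None:
        """Return the answer for the most similar stored query, or None on a miss."""
        best_answer, best_score = None, -1.0
        # Linear scan for clarity; a vector database such as Qdrant uses an
        # approximate nearest-neighbour index (HNSW) instead.
        for vec, answer in self.entries:
            score = cosine(query_vec, vec)
            if score > best_score:
                best_answer, best_score = answer, score
        return best_answer if best_score >= self.threshold else None
```

A miss returns `None`, which is the signal to fall through to the live sources. The threshold trades recall for precision. If it is set too low, loosely related questions get a stale answer. If it is set too high, the cache rarely hits.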

Your Entire Digital Brain, One Keystroke Away

Not just search. Prism is a full AI command center that turns scattered knowledge into instant, cited answers — without ever leaving your machine.

Universal Search

One query, 18 sources, zero context switching. Search Slack, Notion, Google Drive, local files, and more in parallel. Results stream in with clickable citations so you always know where the answer came from.
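For the technically curious, the parallel, streaming search described above follows a fan-out pattern. One query goes to every source concurrently, and results come back in the order they finish rather than the order they were requested. Below is a minimal Python sketch of that pattern. According to this page, Prism itself is built in Rust, so Python is used here only for brevity. `fake_connector` is a hypothetical stand-in that just sleeps, and the per-source timeout is also an assumption, added so that one slow source cannot hold up the rest.

```python
from __future__ import annotations

import asyncio
from collections.abc import AsyncIterator


async def fake_connector(name: str, delay: float, query: str) -> str:
    # Hypothetical stand-in for a real connector (Slack, Notion, Drive, ...):
    # it waits `delay` seconds, then returns a canned hit.
    await asyncio.sleep(delay)
    return f"{name}: result for {query!r}"


async def guarded(name: str, delay: float, query: str, timeout: float) -> tuple[str, str] | None:
    """Run one connector under a deadline; a slow or failing source yields None."""
    try:
        text = await asyncio.wait_for(fake_connector(name, delay, query), timeout)
        return name, text
    except (asyncio.TimeoutError, OSError):
        return None


async def search_all(query: str, sources: dict[str, float], timeout: float = 2.0) -> AsyncIterator[tuple[str, str]]:
    """Send one query to every source at once and yield hits as each one finishes."""
    tasks = [asyncio.create_task(guarded(n, d, query, timeout)) for n, d in sources.items()]
    for next_done in asyncio.as_completed(tasks):
        hit = await next_done
        if hit is not None:  # timed-out sources are simply skipped
            yield hit


async def collect(query: str, sources: dict[str, float], timeout: float = 2.0) -> list[str]:
    """Print each hit as it streams in and return the order in which sources answered."""
    order: list[str] = []
    async for name, text in search_all(query, sources, timeout):
        print(text)
        order.append(name)
    return order
```

Running `asyncio.run(collect("q3 roadmap", {"slack": 0.15, "notion": 0.05, "drive": 0.10}))` should print the Notion hit first, then Drive, then Slack, whatever order the dict lists them in. The slowest source only delays its own result.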

Workflow Automation

Type ">" to trigger n8n workflows from the command palette. Automate repetitive tasks, trigger reports, and orchestrate your tools, all without leaving the keyboard.

Private AI Chat

Have streaming conversations with your local LLM while no data ever leaves your machine. Conversation branching lets you explore multiple lines of thought from any message. It's a personal AI that actually stays personal.

Screenshot to Insight

Capture any region of your screen and instantly extract text with OCR. Feed it directly to your local AI for analysis, summarization, or translation — all without uploading a single pixel.

Smart Prompts

Type "/" to browse categorized prompt templates for coding, writing, analysis, and more. Skip the blank-page problem and get to work faster.

Always One Keystroke Away

Press Ctrl+Alt+Space (Windows/Linux) or Cmd+Option+Space (macOS) to summon Prism over any app. No window switching, no browser tabs. Your AI command center appears instantly, right where you’re working.

Your Knowledge, Organized

Every search is saved locally. Pin important results for quick recall. Your search history is yours alone — stored on your machine, never uploaded, always portable.

Deep Integrations

Fire webhooks on search, chat, or OCR events. Connect Prism to n8n, Zapier, or any HTTP endpoint to build powerful automations that fit your existing workflow.
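On the receiving end, a webhook is just an HTTP POST. Below is a minimal Python sketch of an endpoint that could accept such events, built only on the standard library's `http.server`. The payload shape is a hypothetical assumption: a JSON object with an `event` field of `search`, `chat`, or `ocr`, plus a `data` object. This page does not specify what Prism actually sends, so check its documentation before relying on any field names.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Event names this sketch accepts; an assumption, not Prism's documented set.
KNOWN_EVENTS = {"search", "chat", "ocr"}
RECEIVED: list = []  # stand-in for handing the event to your own automation


class WebhookHandler(BaseHTTPRequestHandler):
    """Accept JSON POSTs shaped like {"event": ..., "data": {...}} (hypothetical)."""

    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length) or b"{}")
        except ValueError:
            return self._reply(400)  # body was not valid JSON (or not valid UTF-8)
        if not isinstance(payload, dict) or payload.get("event") not in KNOWN_EVENTS:
            return self._reply(422)  # valid JSON, but an unexpected shape or event
        RECEIVED.append(payload)     # replace with your own workflow trigger
        self._reply(204)             # success, no response body

    def _reply(self, status: int) -> None:
        self.send_response(status)
        self.end_headers()

    def log_message(self, format: str, *args) -> None:
        pass  # silence the default per-request logging
```

To try it locally, run `HTTPServer(("127.0.0.1", 8787), WebhookHandler).serve_forever()` and point a webhook at `http://127.0.0.1:8787/`. The port is arbitrary. Tools like n8n or Zapier play the same receiving role with their own webhook triggers.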

What Happens on Your Machine, Stays on Your Machine.

Your legal briefs, financial reports, and internal wikis are never sent to OpenAI, Google, or Microsoft. Prism runs entirely on your hardware — every search, every chat, every AI response stays on your local disk. HIPAA-friendly by architecture, not by policy.

- No telemetry or usage analytics — ever

- Local AI inference runs on your hardware with zero cloud calls; cloud models are used only if you add your own API keys

- No tracking pixels, no fingerprinting, no cookies

- Works fully offline — airplane mode ready

- All data stored in local SQLite — portable, inspectable, and yours

- Built on Tauri 2 + Rust — audit the code yourself
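Because the data lives in ordinary SQLite, any SQLite tool can inspect it. Below is a minimal Python sketch that uses only the standard-library `sqlite3` module. It opens a database file read-only and counts the rows in each table. The file location and schema are assumptions: this page does not say where Prism keeps its database or what its tables are called, so `describe_db` is a hypothetical helper that works on any SQLite file.

```python
from __future__ import annotations

import sqlite3
from contextlib import closing
from pathlib import Path


def describe_db(path: str | Path) -> dict[str, int]:
    """Return {table_name: row_count} for every user table in a SQLite file."""
    # A SQLite URI with mode=ro refuses writes, so inspecting can't alter the data.
    uri = Path(path).resolve().as_uri() + "?mode=ro"
    with closing(sqlite3.connect(uri, uri=True)) as conn:
        tables = [row[0] for row in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name")]
        counts: dict[str, int] = {}
        for name in tables:
            quoted = '"' + name.replace('"', '""') + '"'  # safely quote the identifier
            counts[name] = conn.execute(f"SELECT COUNT(*) FROM {quoted}").fetchone()[0]
        return counts
```

Opening the file read-only matters if the app is running at the same time, because the inspection can't modify anything. The same check works from the `sqlite3` command-line shell.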

Connects to the Tools You Already Use

Stop tab-switching between 18 different apps. Prism plugs into your existing stack — self-hosted, local, and cloud — and searches them all from a single interface.

Paperless-ngx · Self-Hosted

Document management with OCR. Search scanned receipts, invoices, contracts, and any PDF in your archive.

BookStack · Self-Hosted

Self-hosted wiki platform. Search across shelves, books, chapters, and pages of your team documentation.

Obsidian · Local Vault

Markdown-based knowledge base. Search notes and daily journals, and follow backlinks through your personal knowledge graph.

Confluence · Cloud / Data Center

Enterprise wiki and team documentation. Search spaces, pages, and blog posts across your organization’s knowledge base.

Notion · Cloud API

Connected workspace for notes, docs, and databases. Search across pages, databases, and team wikis.

Google Drive · Cloud API

Search Docs, Sheets, Slides, and files across your Drive. Full-text search with your own OAuth credentials.

Nextcloud · Self-Hosted

Self-hosted cloud storage and collaboration. Search files, calendars, contacts, and notes on your own server.

Local Files · Local

Index and search files on your local disk. PDFs, text files, code, and documents, all searchable from the palette.

Email (IMAP) · Self-Hosted / Cloud

Search your email inbox via IMAP. Find messages, attachments, and threads without opening a browser.

Slack · Cloud API

Search channels, threads, and DMs across your Slack workspace. Find conversations without context-switching.

SearXNG / Brave · Self-Hosted / API

Privacy-respecting web search. Get real web results without Google tracking. Self-host SearXNG or use the Brave Search API.

LM Studio · Local

Run open-weight LLMs locally. Streaming chat, completions, and embeddings on your own GPU. Llama, Mistral, Phi, and more.

Ollama · Local

Lightweight local LLM runner. Pull and run models with a single command. Native API for chat and embeddings.

Anthropic / Claude · BYOK Cloud

Bring your own API key for Claude. Use frontier models for complex reasoning while keeping queries on your terms.

OpenAI-Compatible · BYOK Cloud / Local

Any OpenAI-compatible API endpoint. Works with GPT, Groq, Together, and self-hosted inference servers.

AnythingLLM · Self-Hosted

RAG-powered knowledge base. Upload documents and get AI answers grounded in your actual files. Fully self-hosted.

Qdrant · Self-Hosted

High-performance vector database for semantic search. Caches embeddings from all sources for instant recall.

n8n Workflows · Self-Hosted

Trigger any n8n workflow from the command palette. Pass parameters, receive results, and automate your entire stack from one hotkey.

Lighter Than a Browser Tab

Engineered natively in Rust, Prism opens in under 500 milliseconds and idles at just 30 MB of RAM. AI that speeds up your computer instead of slowing it down.

30-day free trial. No credit card required. No account needed.

Pay Once. Own It Forever.

No subscriptions. No recurring fees. No per-seat monthly drain. One purchase gets you lifetime access and 12 months of free updates. Own your software again.

Use code LAUNCH50 at checkout for 50% off (first 1,000 buyers only).

Foundation - Up to 5 connectors

- Private local AI chat

- Full-text & semantic search

- Community support

Professional - Unlimited connectors

- AI-powered document Q&A (RAG)

- Bring your own cloud AI keys

- Multi-step agent workflows

- Priority email support

- Everything in Professional

- API server & webhooks

- HIPAA & SOC 2 compliance templates

- Full audit trail export

- Priority support & onboarding

Your Workday Deserves Better.

Stop losing 9 hours a week digging through tools for answers. Search everything, leak nothing, and own your AI forever.

Get launch pricing

Drop your email. 50% off while supplies last, 20% off after.


GitHub · Duncan Smith · Blog